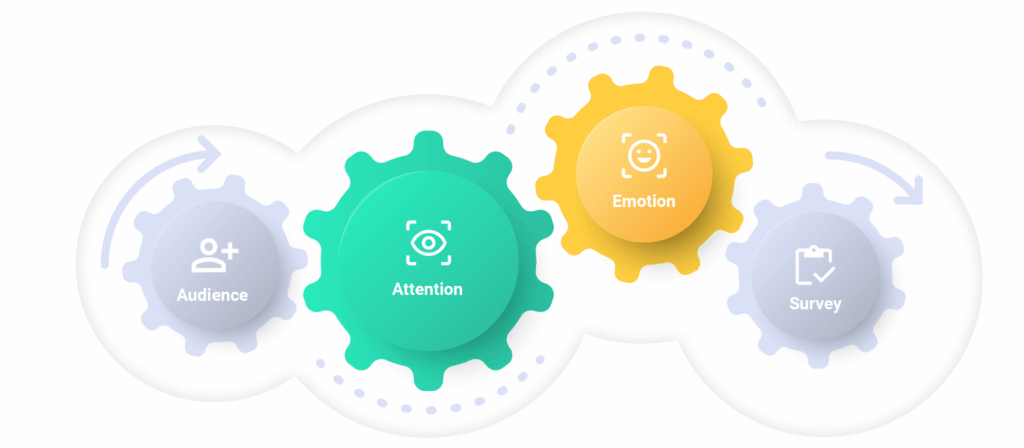

Test high-value campaign creative with target audiences, using rich data sources including Attention, Emotional Reactions, Focus Heatmaps and Brand Lift surveys.

Compare your creative against deep market, category and platform benchmarks

View attention levels, emotional reactions, brand recognition & brand lift data

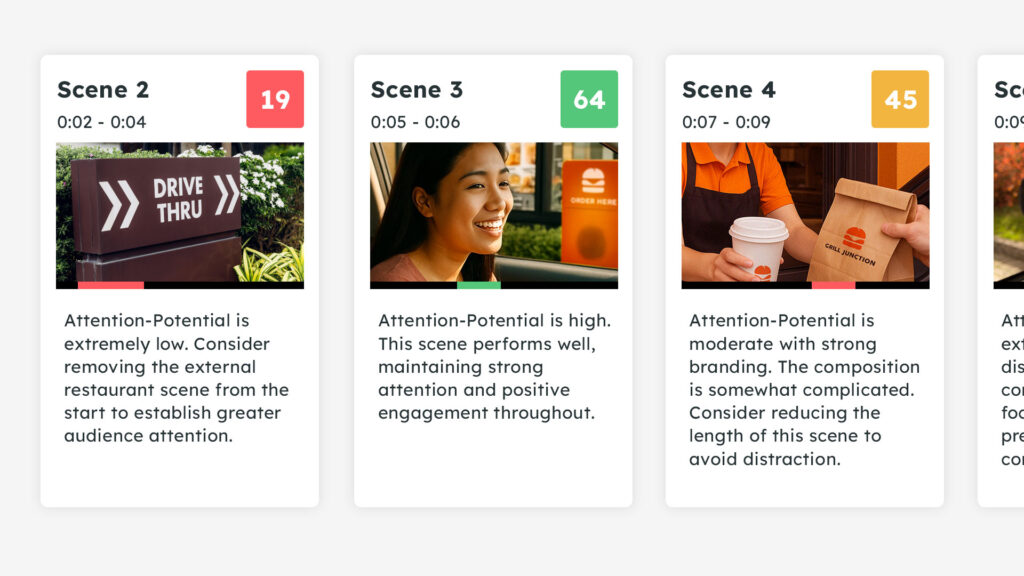

Scene by scene recommendations to improve resonance

Choose sample thresholds, such as age splits or generations, and the media context in which the audience will view the content, e.g. social media platform, TV, CTV, BVOD, etc.

See how the creative breaks through and captures attention. Check whether attention is sustained through critical moments.

See how the audience responds to the creative, whether they react with surprise or positive or negative emotion.

See how the creative drives brand equity with brand recognition, ad likeability and persuasion questions.

A sample audience views your ads within the desired platform context, or in a neutral video player to test TV or audio creative.

Compare creative versions and sort by Brand, Category, Ad Format, Duration, Segment and more. Use metrics that are customized to match KPIs and category benchmarks.

Simple traffic light thresholds (GO / FIX / NO GO) make performance comparison easy and surface which ads are ready to launch, which require an edit, and which should be replaced.
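As a rough illustration of how a traffic light rating could be derived, the sketch below compares an ad's score with a category benchmark. The function name, the ratio-based rule and the cut-off values are assumptions made for this example; they are not the thresholds PreView applies.

```python
from __future__ import annotations


def traffic_light(score: float, benchmark: float,
                  go_ratio: float = 1.0, fix_ratio: float = 0.8) -> str:
    """Return "GO", "FIX" or "NO GO" for a score relative to a benchmark.

    go_ratio and fix_ratio are hypothetical cut-offs, expressed as a
    fraction of the benchmark value.
    """
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    ratio = score / benchmark
    if ratio >= go_ratio:
        return "GO"      # at or above benchmark: ready to launch
    if ratio >= fix_ratio:
        return "FIX"     # close to benchmark: worth an edit
    return "NO GO"       # well below benchmark: consider replacing


print(traffic_light(score=45, benchmark=50))
```

In practice the cut-offs would be tuned per metric and category rather than fixed at these placeholder values.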

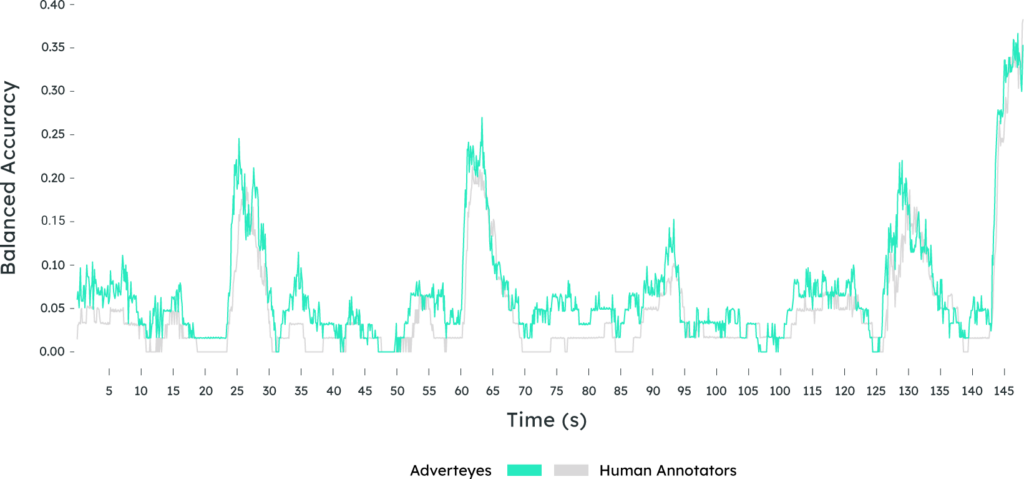

Diagnose audience response with second-by-second traces that pinpoint which scenes retain or lose engagement. See which moments trigger certain responses such as Happiness, Confusion or Negative reactions.
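One simple way to read a second-by-second trace is to look for the steepest declines between consecutive seconds. The sketch below assumes the trace is a plain list where each entry is the share of viewers paying attention at that second; the function name and data layout are hypothetical and only illustrate the idea.

```python
from __future__ import annotations


def largest_drops(trace: list[float], top_n: int = 3) -> list[tuple[int, float]]:
    """Return (second, drop) pairs for the steepest second-to-second declines.

    trace[i] is assumed to be the share of viewers attentive at second i
    (0.0 to 1.0). Seconds where attention holds or rises are ignored.
    """
    drops = [
        (second, trace[second - 1] - trace[second])
        for second in range(1, len(trace))
        if trace[second] < trace[second - 1]
    ]
    # Largest drop first; equal drops keep their chronological order.
    drops.sort(key=lambda item: item[1], reverse=True)
    return drops[:top_n]


example_trace = [0.90, 0.85, 0.60, 0.62, 0.50]
for second, drop in largest_drops(example_trace, top_n=2):
    print(f"second {second}: attention fell by {drop:.2f}")
```

Mapping the flagged seconds back to scene boundaries would then show which scenes lose engagement.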

See frame-by-frame predicted focus of what draws audience attention.

Low-performing key scenes receive AI-assisted suggestions to improve performance and effectiveness.

A lightweight survey experience for tracking brand recognition, message breakthrough, likeability and ad persuasion.

Brand Recognition

Ad Recognition

Ad Likeability

Using the front-facing cameras of opted-in viewers, attention levels and facial expressions are measured as viewers naturally experience your content on their own device, at home, in the wild. Recognition and Likeability multiple-choice surveys are included as standard.

Does the creative break through and capture attention?

Does the creative resonate by achieving reactions?

Does the ad creative drive brand equity?

Our proprietary ad testing method enables respondents to view your creative as they normally would, at home on their own device. The experience is quick, simple and 100% GDPR compliant.

Realeyes is accredited for SOC 2 compliance, providing assurance of the confidentiality, integrity and availability of customer data, with robust controls and safeguards to protect sensitive information.

Pre-Exposure Survey

Target ad in interactive simulated platforms

Attention measurement

Brand recognition (multi-select)

Ad recognition (multi-select)

Target ad exposure in neutral video player

Reaction Measurement

Ad likeability and brand trust ratings

Ad persuasion (single select)

Optional audience (max 3 questions)

Attention measurement works best when creative and media are viewed together. In Context testing enables advertisers to assess how their sample audience responds to their creative within a specific media environment, such as a social media platform, TV, CTV, BVOD or audio only.

YouTube

A fully dynamic YouTube environment including: Skippable Preroll / Non-Skippable Preroll / Bumper Ad / Shorts Video Ad

TikTok

Vertical fullscreen ads between authentic TikTok content including: Infeed Video & TopView Video Ad

Newsfeed, Stories and Reels ads within an authentic app experience.

Test Newsfeed, Stories and Instream Midroll ads between live Facebook content.

SnapChat

X

The most advanced, patented and award-winning attention measurement technology, PreView uses the front-facing camera of opted-in participants as they experience your ads at home on their own device.

15 pending, innovation is at the core

High performance global data collection

Biggest culturally sensitive AI training set in the world

Our AI recognizes human attention and emotional expression in the same way that people do: by separating the foreground from the background, then focusing on and detecting the presence of a face and its countenance.

Facial coding picks up the same subtle behavioral cues that the human brain instinctively processes to gauge someone’s attention to stimuli, and any changes in their expression.

We use ‘in the wild’ datasets and machine learning to teach our algorithms to cope with complexities such as poor lighting, heavy shadows, thick facial hair, spectacles and other occlusions that would typically make attention and expression tracking more challenging.

For each video frame, the AI instantly interprets the information like a human: it detects the existence of a face, separates it from the background, focuses on facial features, and tracks the 3D head position, eye position, eyelids (palpebral aperture) and the shape of the expression.

Additionally, we create a person-dependent baseline, or mean face shape. Measuring expressions as deviations from this ‘neutral’ face accounts for people who naturally look more positive or negative, which reduces bias.
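The baseline idea can be sketched in a few lines. The example below assumes each frame is a flat list of facial landmark coordinates for a single viewer, and it uses the per-coordinate mean across that viewer's frames as the baseline. Both the data layout and the choice of a simple mean are assumptions for illustration, not a description of the production model.

```python
from __future__ import annotations

from statistics import fmean


def deviation_from_baseline(frames: list[list[float]]) -> list[list[float]]:
    """Subtract one viewer's mean face shape from each of their frames.

    Each frame is a flat list of landmark coordinates (hypothetical layout).
    The result expresses every frame as a deviation from that viewer's
    own average face rather than from a population-wide neutral face.
    """
    if not frames:
        return []
    # Person-dependent baseline: mean of each coordinate across all frames.
    baseline = [fmean(column) for column in zip(*frames)]
    return [
        [value - base for value, base in zip(frame, baseline)]
        for frame in frames
    ]


viewer_frames = [[1.0, 2.0], [3.0, 4.0]]
print(deviation_from_baseline(viewer_frames))
```

Because the baseline is computed per viewer, a face that rests in a slight smile or frown produces deviations near zero until the expression actually changes.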

Realeyes uses a proprietary Convolutional Neural Network (CNN), a type of deep neural network specifically designed for processing and analyzing images and videos. Data is processed in the cloud in three steps.

AI detects face presence by the existence of facial features – not their identity

AI isolates and extracts the facial features from each camera frame

AI uses facial landmarks to track the position and movement of attention and expression

We define attention as awareness of a stimulus while ignoring other stimuli. We quantify focus or interest by tracking head pose, face direction, eyelid openness and gaze direction in response to the stimulus (ad content).

Eyes focused or fixated on a stimulus are interpreted as full attention, whereas closed eyes, looking away, or obscured eyes (e.g. covered with hands) indicate the absence of attention, often described as distraction.

When a person is attentive, their head position tends to be aligned with the stimulus (the target of their attention). Turning the head left, right, up or down so that the eyes no longer see the stimulus indicates negative attention.
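The cues described above (head pose, eyelid openness and gaze) can be combined into a simple per-frame rule. The sketch below is a hand-written approximation under assumed inputs: the field names, the angle limit and the eye-openness cut-off are all hypothetical, and the actual system uses a trained neural network rather than fixed rules.

```python
from dataclasses import dataclass


@dataclass
class FrameSignals:
    """Hypothetical per-frame measurements for one viewer."""
    yaw_deg: float        # head turn left/right, 0 = facing the screen
    pitch_deg: float      # head tilt up/down, 0 = facing the screen
    eye_openness: float   # 0.0 = closed, 1.0 = fully open
    gaze_on_screen: bool  # whether the estimated gaze lands on the stimulus


def is_attentive(signals: FrameSignals,
                 max_angle_deg: float = 25.0,
                 min_eye_openness: float = 0.2) -> bool:
    """Return True when head, eyes and gaze all point at the stimulus.

    max_angle_deg and min_eye_openness are illustrative placeholder values.
    """
    head_aligned = (abs(signals.yaw_deg) <= max_angle_deg
                    and abs(signals.pitch_deg) <= max_angle_deg)
    eyes_open = signals.eye_openness >= min_eye_openness
    return head_aligned and eyes_open and signals.gaze_on_screen


print(is_attentive(FrameSignals(5.0, -3.0, 0.8, True)))   # facing the screen
print(is_attentive(FrameSignals(40.0, 0.0, 0.8, True)))   # head turned away
```

Averaging this flag across viewers for each second would give an attention trace of the kind used to compare scenes.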

In addition to eye movement and head pose, when individuals are attentive their facial expressions may exhibit specific patterns attributed to emotional engagement, for example Happiness, Surprise, Sadness, Confusion or Concentration.

These are the movement and configurations of facial muscles, including the movements of the eyebrows, eyes, nose, mouth and cheeks.

Every day, people rely on facial expressions to communicate how they think and feel to others. This kind of non-verbal communication is a natural part of our behavior.

Our patented technology recognizes and interprets human expression through facial cues. These facial expressions are classified into metrics based on specific muscle movements and configurations of facial features.

A smile is formed from the cheeks rising and the corners of the mouth pulling up.

A surprised expression is formed from a combination of raised eyebrows, eyes wide open (raised eyelids) and the jaw dropping to reveal an open mouth.

A frown is formed with the lowering of the brow, a raising and narrowing of the eyelids, and a tightening of the lips.

Disgust is expressed by the nose wrinkling, a downturn of the lower lip and the corners of the mouth moving downwards.

This expression is characterized by raised eyebrows, widened eyes, nose wrinkling, a tensed mouth and elevated cheeks resembling a startled appearance.

This expression is formed by a slight curling of the lip corners, often accompanied by a tightening of the jaw muscles.

This expression is characterized by a downturned mouth, furrowed or lowered eyebrows, narrowed eyes and tensed cheeks that appear slightly raised.

The training data for our models comes from our proprietary dataset, collected over more than 12 years of developing facial coding models. Camera recordings are collected across different countries, age groups and sexes to achieve fair and balanced representation globally.

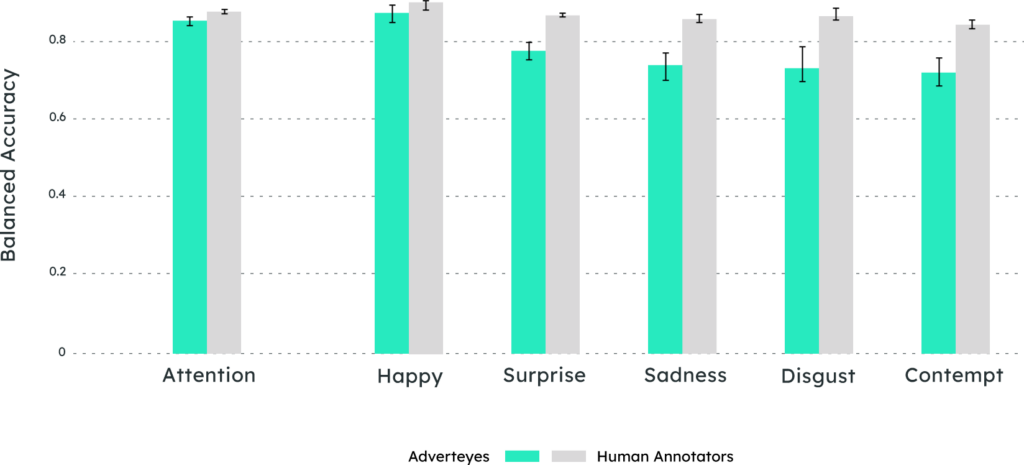

Those recordings are annotated for attention and facial expressions by a large pool of qualified psychologists and annotators. Employing a “wisdom of the crowds” approach, video frames are only labelled when 3 to 7 annotators reach a majority agreement on each specific frame. As an additional step, annotated labels then go through a standardized quality assurance review.
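The majority-agreement rule can be illustrated with a short sketch. It assumes each frame receives one label per annotator and that "majority" means more than half of the votes; the function name and the strict-majority interpretation are assumptions, since the exact agreement rule is not spelled out here.

```python
from __future__ import annotations

from collections import Counter
from typing import Optional


def majority_label(votes: list[str],
                   min_annotators: int = 3,
                   max_annotators: int = 7) -> Optional[str]:
    """Return the label backed by a strict majority, or None.

    A frame is left unlabelled (None) when the number of votes falls
    outside the 3 to 7 annotator range or when no label wins more than
    half of the votes.
    """
    if not min_annotators <= len(votes) <= max_annotators:
        return None
    label, count = Counter(votes).most_common(1)[0]
    return label if count > len(votes) / 2 else None


print(majority_label(["attentive", "attentive", "distracted"]))
print(majority_label(["happy", "neutral", "surprised"]))
```

Frames that return `None` would simply be excluded from training, so only labels that annotators broadly agree on reach the model.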

